Essential Data Analyst Tools: Discover a List of the 17 Best Data Analysis Software & Tools on the Market

Top 17 Software & Tools for Data Analysts (2023)

Table of Contents

1) What are data analyst tools?

2) The 17 best data analyst tools for 2023

3) Key takeaways & guidance

To perform data analysis at the highest level, analysts and data professionals rely on software that supports a wide range of tasks, from executing algorithms, preparing data, generating predictions, and automating processes to standard tasks such as visualising and reporting on data. Although there are many of these solutions on the market, data analysts must choose wisely to get the most out of their analytical efforts. In this article, we will cover the best data analyst tools and name the key features of each, organised by type of analysis process. But first, we will start with a basic definition and a brief introduction.

1) What Are Data Analyst Tools?

Data analyst tools is a term used to describe the software and applications that data analysts use to develop and perform analytical processes that help companies make better-informed business decisions while decreasing costs and increasing profits.

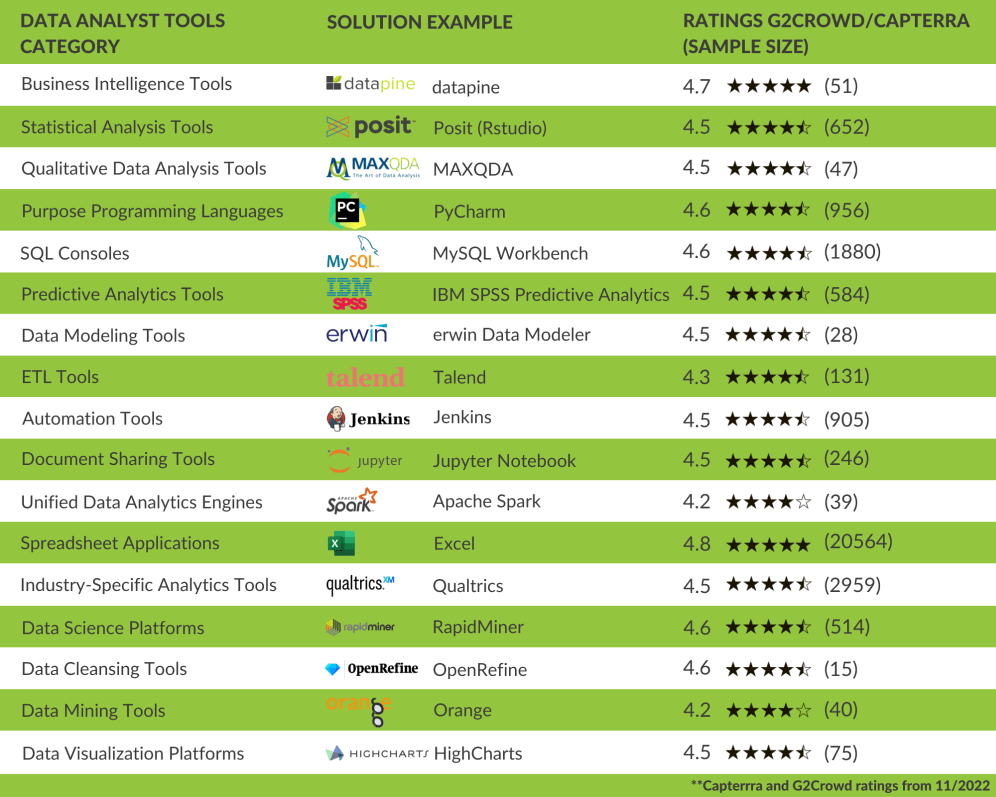

To help you decide which software to choose as an analyst, we have compiled a list of top data analyst tools with different focuses and features, organised into software categories and each represented by one example. These examples were researched and selected using rankings from two major software review sites: Capterra and G2Crowd. Within each software category presented in this article, we selected the most successful solutions with at least 15 reviews across both review websites as of November 2022. The order in which these solutions are listed is random and does not represent a grading or ranking system.

2) What Tools Do Data Analysts Use?

Out of the vast number of software products currently on the market, we will focus on the most prominent tools needed to be an expert data analyst. The image above provides a visual summary of all the areas and tools covered in this post. These data analysis tools are mostly focused on making analysts’ lives easier by providing solutions that make complex analytical tasks more efficient, which leaves them more time for the analytical part of their job. Let’s get started with business intelligence tools.

1. Business intelligence tools

BI tools are one of the most common means of performing data analysis. Specialising in business analytics, these solutions benefit every data analyst who needs to analyse, monitor, and report on important findings. Features such as self-service, predictive analytics, and advanced SQL modes make these solutions adaptable to every level of knowledge, without the need for heavy IT involvement. With this set of features, analysts can understand trends and make tactical decisions. Our data analytics tools article wouldn’t be complete without business intelligence, and datapine is one example that covers most of the requirements of both beginner and advanced users. This all-in-one tool aims to facilitate the entire analysis process, from data integration and discovery to reporting.

datapine

KEY FEATURES:

Visual drag-and-drop interface to build SQL queries automatically, with the option to switch to an advanced (manual) SQL mode

Powerful predictive analytics features, interactive charts and dashboards, and automated reporting

AI-powered alarms that are triggered as soon as an anomaly occurs or a goal is met

datapine is a popular business intelligence software with an outstanding rating of 4.8 stars on Capterra and 4.6 stars on G2Crowd. It focuses on putting simple yet powerful analysis features into the hands of beginners and advanced users who need a fast and reliable online data analysis solution for all analysis stages. An intuitive user interface lets you simply drag and drop your desired values into datapine’s Analyzer and create numerous charts and graphs that can be combined into an interactive dashboard. If you’re an experienced analyst, you might want to consider the SQL mode, where you can build your own queries or run existing code or scripts. Another crucial feature is the predictive analytics forecast engine, which can analyse data from multiple sources that have been integrated beforehand through its various data connectors. While there are numerous predictive solutions out there, datapine provides simplicity and speed at its finest. By simply defining the input and output of the forecast based on specified data points and the desired model quality, a complete chart will unfold together with predictions.

We should also mention datapine’s artificial intelligence features, which are becoming an invaluable assistant in today’s analysis processes. Neural networks, pattern recognition, and threshold alerts notify you as soon as a business anomaly occurs or a previously set goal is met, so you don’t have to manually analyse large volumes of data: the data analytics software does it for you. You can access your data from any device with an internet connection and share your findings easily and securely via dashboards or customised reports with anyone who needs quick answers to any type of business question.
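To make the idea of threshold alerts more concrete, here is a minimal Python sketch of a rule-based alert check. It is not datapine’s implementation, which the vendor describes as using neural networks and pattern recognition; it assumes a simple z-score rule for anomalies and a fixed goal value, and the function name `check_alerts`, its default threshold, and the sales figures are all hypothetical.

```python
from statistics import mean, stdev

def check_alerts(history, latest, goal, z_threshold=3.0):
    """Return alert messages for the latest value of a metric.

    history: past values of the metric (at least two are needed for stdev)
    latest: the newest observation
    goal: a target value; reaching or exceeding it triggers a goal alert
    z_threshold: how many standard deviations count as an anomaly (assumed default)
    """
    alerts = []
    mu = mean(history)
    sigma = stdev(history)
    # Anomaly rule: the latest value lies unusually far from the historical mean
    if sigma > 0 and abs(latest - mu) / sigma >= z_threshold:
        alerts.append(f"anomaly: {latest} deviates from mean {mu:.1f}")
    # Goal rule: the latest value meets or exceeds the target
    if latest >= goal:
        alerts.append(f"goal met: {latest} >= {goal}")
    return alerts

# Hypothetical daily sales history followed by a sudden spike
daily_sales = [100, 102, 98, 101, 99, 100, 103]
print(check_alerts(daily_sales, 160, goal=150))
```

A z-score rule like this is easy to reason about but assumes the metric is fairly stable; seasonal data would need a more sophisticated baseline.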

2. Statistical Analysis Tools

Next in our list of data analytics tools comes a more technical area: statistical analysis. Statistical computing draws on a variety of techniques to manipulate, explore, and generate insights from data, and multiple programming languages exist to make (data) scientists’ work easier and more effective. Scientific work in particular has its own rules and scenarios that need special attention when it comes to statistical data analysis and modelling. Here we will present one of the most popular tools for data analysts: Posit (previously known as RStudio), best known for its IDE for the R programming language. Although other languages also focus on (scientific) data analysis, R is particularly popular in the community.

POSIT (RSTUDIO)

KEY FEATURES:

An ecosystem of more than 10 000 packages and extensions for distinct types of data analysis

Statistical analysis, modelling, and hypothesis testing (e.g. analysis of variance and t-tests)

Active and communicative community of researchers, statisticians, and scientists

Posit, formerly known as RStudio, offers some of the top data analyst tools for R and Python. Its development dates back to 2009, and it is one of the most widely used software platforms for statistical analysis and data science. It follows an open-source policy and runs on a variety of platforms, including Windows, macOS, and Linux. As a result of the rebranding, some of the platform’s well-known products have changed their names, while others have stayed the same. For example, RStudio Workbench and RStudio Connect are now known as Posit Workbench and Posit Connect, respectively, while products like RStudio Desktop and RStudio Server keep their names. As stated on the company’s website, the rebranding happened because the name RStudio no longer reflected the variety of products and languages that the platform supports.

Posit’s RStudio is one of the most popular integrated development environments (IDEs) for R, rated 4.7 stars on Capterra and 4.5 stars on G2Crowd. Its capabilities for data cleaning, data reduction, and report output with R Markdown make it an invaluable analytical assistant for both general and academic data analysis. It draws on an ecosystem of more than 10 000 packages and extensions that you can explore by category to perform any kind of statistical analysis, such as regression, conjoint, factor, and cluster analysis. Because R can perform complex mathematical operations with a single command, it is accessible even to users without advanced programming skills. Graphics libraries such as ggplot2 and plotly also set R apart in the statistical community, since they make it efficient to create high-quality visualisations.

While Posit’s tools were mostly used in academia in the past, today they have applications across industries and at large companies such as Google, Facebook, Twitter, and Airbnb, among others. Because so many researchers, scientists, and statisticians use it, the tool has an extensive and active community where innovative technologies and ideas are presented and discussed regularly.
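In Posit’s tools, a two-sample comparison is typically a one-line call to R’s built-in `t.test()`, which applies Welch’s version of the test by default. To keep all examples in this article in one language, the sketch below shows in Python (standard library only) what that test computes: Welch’s t statistic and its approximate degrees of freedom. The data are hypothetical, and the p-value step, which needs the t distribution, is omitted.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(a, b):
    """Return Welch's two-sample t statistic and its approximate degrees of freedom."""
    # Squared standard error of each group's mean (sample variance / sample size)
    se2_a = variance(a) / len(a)
    se2_b = variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation of the degrees of freedom
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1))
    return t, df

# Hypothetical task-completion times (minutes) for two versions of a dashboard
version_a = [12.1, 11.4, 13.0, 12.7, 11.9, 12.4]
version_b = [10.8, 11.2, 10.5, 11.7, 10.9, 11.1]
t, df = welch_t(version_a, version_b)
print(f"t = {t:.2f}, df = {df:.1f}")
```

Welch’s variant is a sensible default because it does not assume the two groups share the same variance.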

3. QUALITATIVE DATA ANALYSIS TOOLS

Naturally, when we think about data, our minds automatically go to numbers. Although much of the extracted data might be numeric, there is also immense value in collecting and analysing non-numerical information, especially in a business context. This is where qualitative data analysis tools come into the picture. These solutions give researchers, analysts, and businesses the functionality they need to make sense of massive amounts of qualitative data from different sources, such as interviews, surveys, e-mails, customer feedback, social media comments, and much more, depending on the industry. There is a wide range of qualitative analysis software out there, and the most innovative solutions rely on artificial intelligence and machine learning algorithms to make the analysis process faster and more efficient. Today, we will discuss MAXQDA, one of the most powerful QDA platforms on the market.

MAXQDA

KEY FEATURES:

The possibility to mark important information using codes, colours, symbols or emojis

AI-powered audio transcription capabilities such as speed and rewind controls, speaker labels, and others

Possibility to work with multiple languages and scripts thanks to Unicode support

Founded in 1989 “by researchers, for researchers”, MAXQDA is a qualitative data analysis software for Windows and Mac that helps users organise and interpret qualitative data from different sources with the help of innovative features. Unlike some other solutions in the same category, MAXQDA supports a wide range of data sources and formats. Users can import traditional text data from interviews, focus groups, web pages, and YouTube or Twitter comments, as well as various types of multimedia data such as video or audio files. In addition, the software offers a Mixed Methods tool that lets users combine qualitative and quantitative data for a more complete analytics process. This level of versatility has earned MAXQDA worldwide recognition for many years. The tool has a positive rating of 4.6 stars on Capterra and 4.5 on G2Crowd.

Amongst its most valuable functions, MAXQDA offers users the capability of setting different codes to mark their most important data and organise it in an efficient way. Codes can be easily generated via drag & drop and labelled using colours, symbols, or emojis. Your findings can later be transformed, automatically or manually, into professional visualisations and exported in various readable formats such as PDF, Excel, or Word, among others.
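Conceptually, coding in qualitative analysis means attaching labels to passages of text and then counting or retrieving passages by label. The following Python sketch illustrates that idea with hypothetical interview excerpts; the `Segment` class and the helper functions are illustrative assumptions, not MAXQDA’s data model or API.

```python
from collections import Counter
from dataclasses import dataclass, field

@dataclass
class Segment:
    source: str                                   # which interview the text comes from
    text: str                                     # the marked passage
    codes: set[str] = field(default_factory=set)  # codes assigned by the researcher

def code_frequencies(segments):
    """Count how often each code was assigned across all segments."""
    counts = Counter()
    for segment in segments:
        counts.update(segment.codes)
    return counts

def retrieve(segments, code):
    """Return every segment tagged with the given code."""
    return [segment for segment in segments if code in segment.codes]

# Hypothetical customer interview excerpts
segments = [
    Segment("interview_1", "The app is slow when I open reports.", {"performance", "negative"}),
    Segment("interview_1", "Support answered within minutes.", {"support", "positive"}),
    Segment("interview_2", "Loading dashboards takes ages.", {"performance", "negative"}),
]
print(code_frequencies(segments))
for segment in retrieve(segments, "performance"):
    print(segment.source, "-", segment.text)
```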

4. General-purpose programming languages

Programming languages are used to solve a variety of data problems. Having covered R and statistical programming, we now turn to general-purpose languages, which use letters, numbers, and symbols with a formal syntax to create programs. They are often called text-based languages because you write the code that ultimately solves a problem. Examples include C#, Java, PHP, Ruby, Julia, and Python, among many others. Here we will focus on Python and present PyCharm as one of the best tools for data analysts who also have coding knowledge.

PYCHARM

KEY FEATURES:

Intelligent code inspection and completion with error detection, code fixes, and automated code refactorings

Built-in developer tools for smart debugging, testing, profiling, and deployment

Cross-technology development supporting JavaScript, CoffeeScript, HTML/CSS, Node.js, and more

PyCharm is an integrated development environment (IDE) by JetBrains, designed for developers who want to write better Python code more productively from a single platform. The tool, which is rated 4.7 stars on Capterra and 4.6 on G2Crowd, offers developers a range of essential features, including an integrated visual debugger, a GUI-based test runner, integration with major version control systems (VCS), built-in database tools, and much more. Among its most praised features, the intelligent code assistance provides smart code inspections that highlight errors and offer quick fixes and code completions.

PyCharm supports the most important Python implementations, including Python 2.x and 3.x, Jython, IronPython, PyPy, and Cython, and it is available in three editions: the free and open-source Community edition, the paid Professional edition with all advanced features, and the Edu edition, which is also free and open-source and intended for educational purposes. It is definitely one of the best Python data analyst tools on the market.

5. SQL consoles

Our data analyst tools list wouldn’t be complete without SQL consoles. Essentially, SQL is a programming language used to manage and query data held in relational databases, and it is particularly effective for handling structured data. It’s highly popular in the data science community and is used in a wide range of business cases and data scenarios. The reason is simple: since most data is stored in relational databases, and you need to access that data to unlock its value, SQL is a critical component of succeeding in business, and analysts who learn it gain a competitive advantage. There are different relational (SQL-based) database management systems, such as MySQL, PostgreSQL, MS SQL Server, and Oracle, and learning to work with them is extremely beneficial for any serious analyst. Here we will focus on MySQL Workbench, one of the most popular SQL consoles.

MySQL Workbench

KEY FEATURES:

A unified visual tool for data modelling, SQL development, administration, backup, etc.

Instant access to database schema and objects via the Object Browser

SQL Editor that offers colour syntax highlighting, reuse of SQL snippets, and execution history

MySQL Workbench is used by analysts to visually design, model, and manage databases, optimise SQL queries, administer MySQL environments, and use a suite of tools to improve the performance of MySQL applications. It allows you to perform tasks such as creating and viewing databases and objects (e.g. triggers or stored procedures), configuring servers, and much more. You can easily perform backup and recovery as well as inspect audit data. MySQL Workbench also helps with database migration and is a complete solution for analysts working in relational database management and for companies that need to keep their databases clean and effective. The tool, which is very popular among analysts and developers, is rated 4.6 stars on Capterra and 4.5 on G2Crowd.
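For readers who want to try the kind of query typically written in a SQL console such as MySQL Workbench without setting up a server, the sketch below uses Python’s built-in `sqlite3` module with an in-memory database. The `orders` table and its rows are hypothetical, and SQLite’s SQL dialect differs from MySQL’s in some details, although a simple aggregation like this one is written the same way in both.

```python
import sqlite3

# In-memory SQLite database; the table and rows are hypothetical sample data
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders (region, amount) VALUES (?, ?)",
    [("North", 120.0), ("South", 80.0), ("North", 200.0), ("West", 50.0)],
)

# A typical analyst query: total and average order value per region, largest first
query = """
    SELECT region, SUM(amount) AS total, AVG(amount) AS average
    FROM orders
    GROUP BY region
    ORDER BY total DESC
"""
rows = conn.execute(query).fetchall()
for region, total, average in rows:
    print(f"{region}: total={total}, average={average}")
```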

6. Standalone predictive analytics tools

Predictive analytics is an advanced technique that combines data mining, machine learning, predictive modelling, and artificial intelligence to predict future events. It deserves a special place in our list of data analysis tools, as its popularity has increased in recent years with the introduction of smart solutions that simplify analysts’ predictive analytics processes. Keep in mind that some BI tools we already discussed offer easy-to-use, built-in predictive analytics; in this section, however, we focus on standalone, advanced predictive analytics that companies use for various purposes, from detecting fraud through pattern detection to optimising marketing campaigns by analysing consumer behaviour and purchases. Here we will present a data analysis software that supports predictive analytics processes and helps analysts forecast future scenarios.

IBM SPSS PREDICTIVE ANALYTICS ENTERPRISE

KEY FEATURES:

A visual predictive analytics interface to generate predictions without code

Can be integrated with other IBM SPSS products for a complete analysis scope

Flexible deployment to support multiple business scenarios and system requirements

IBM SPSS Predictive Analytics gives enterprises the power to make better operational decisions with the help of various predictive intelligence features, such as in-depth statistical analysis, predictive modelling, and decision management. The tool offers a visual interface for predictive analytics that average business users with no coding knowledge can easily use, while still providing analysts and data scientists with more advanced capabilities. This way, users can take advantage of predictions to inform important decisions in real time and with greater confidence.

Additionally, the platform provides flexible deployment options to support multiple scenarios, business sizes, and use cases, such as supply chain analysis or cybercrime prevention, among many others. Flexible data integration and manipulation is another important feature of this software: structured and unstructured data, including text data, from multiple sources can be analysed for predictive modelling that translates into intelligent business outcomes.

As part of the IBM product suite, users of the tool can take advantage of other solutions and modules, such as IBM SPSS Modeler, IBM SPSS Statistics, and IBM SPSS Analytic Server, for a complete analytical scope. Reviewers gave the software a 4.5-star rating on Capterra and 4.2 on G2Crowd.

7. Data modelling tools

Our list of data analysis tools wouldn’t be complete without data modelling. Data modelling means creating models that structure the database and design business systems using diagrams, symbols, and text; these models ultimately represent how data flows and how it is connected. Businesses use data modelling tools to determine the exact nature of the information they control and the relationships between datasets, and analysts are critical in this process. If you need to discover, analyse, and specify changes in information stored in a software system, database, or other application, chances are your skills are critical for the overall business. Here we will show one of the most popular data analyst software used to create models and design your data assets.

erwin Data Modeler (DM)

KEY FEATURES:

Automated data model generation to increase productivity in analytical processes

Single interface no matter the location or the type of the data

5 different versions of the solution you can choose from and adjust based on your business needs

erwin DM works with both structured and unstructured data, in a data warehouse and in the cloud. It’s used to “find, visualise, design, deploy and standardise high-quality enterprise data assets,” as stated on its official website. erwin can help you reduce complexity and understand your data sources to meet your business goals and needs. It also offers automated processes that generate models and designs automatically to reduce errors and increase productivity. This is one of the analyst tools that focus on data architecture, enabling you to create conceptual, logical, and physical data models.

Additional features, such as a single interface for any data you might possess, whether structured or unstructured, in a data warehouse or in the cloud, make this solution highly adaptable to your analytical needs. With 5 versions of erwin Data Modeler available, companies and analysts can choose the data modelling features they need. This versatility is reflected in its positive reviews, earning the platform an almost perfect 4.8-star rating on Capterra and 4.3 stars on G2Crowd.
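The step from a logical model (entities, attributes, relationships) to a physical model (database-specific tables) can be sketched in a few lines of Python. The `Column` and `Entity` classes and the `to_ddl` helper below are hypothetical and much simpler than what erwin DM generates; the example only shows how one customer-to-orders relationship becomes `CREATE TABLE` statements with a foreign key, checked against an in-memory SQLite database.

```python
import sqlite3
from dataclasses import dataclass

@dataclass
class Column:
    name: str
    sql_type: str
    primary_key: bool = False
    references: str = ""  # "table(column)" for a foreign key; empty if none

@dataclass
class Entity:
    name: str
    columns: list[Column]

def to_ddl(entity):
    """Turn one entity of the logical model into a physical CREATE TABLE statement."""
    column_defs = []
    for col in entity.columns:
        definition = f"{col.name} {col.sql_type}"
        if col.primary_key:
            definition += " PRIMARY KEY"
        if col.references:
            definition += f" REFERENCES {col.references}"
        column_defs.append(definition)
    return f"CREATE TABLE {entity.name} ({', '.join(column_defs)})"

# Hypothetical model: one customer places many orders
customer = Entity("customer", [Column("id", "INTEGER", primary_key=True), Column("name", "TEXT")])
customer_order = Entity("customer_order", [
    Column("id", "INTEGER", primary_key=True),
    Column("customer_id", "INTEGER", references="customer(id)"),
    Column("amount", "REAL"),
])

# Check that the generated DDL is valid by applying it to an in-memory SQLite database
conn = sqlite3.connect(":memory:")
for entity in (customer, customer_order):
    print(to_ddl(entity))
    conn.execute(to_ddl(entity))
```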

8. ETL tools

ETL is a process used by companies of all sizes across the world, and as a business grows, chances are you will need to extract, transform, and load data into another database to be able to analyse it and build queries. There are several core types of ETL tools for data analysts, such as batch ETL, real-time ETL, and cloud-based ETL, each with its own specifications and features that suit different business needs. These tools are used by analysts who take part in the more technical processes of data management within a company, and one of the best examples is Talend.

Talend

KEY FEATURES:

Collecting and transforming data through data preparation, integration, and a cloud pipeline designer

Talend Trust Score to ensure data governance and resolve quality issues across the board

Sharing data internally and externally through comprehensive deliveries via APIs

Talend is a data integration platform used by experts across the globe for data management processes, cloud storage, enterprise application integration, and data quality. It’s a Java-based ETL tool that analysts use to easily process millions of data records, and it offers comprehensive solutions for any data project you might have. Talend’s features include (big) data integration, data preparation, a cloud pipeline designer, and the Stitch data loader to cover an organisation’s multiple data management requirements. Users rated the tool 4.2 stars on Capterra and 4.3 on G2Crowd. This analyst software is extremely important if you need to work on ETL processes in your analytics department.

Apart from collecting and transforming data, Talend also offers a data governance solution to build a data hub and provide self-service access to it through a unified cloud platform. You can use its data catalogue and data inventory, and produce clean data through its data quality feature. Sharing is also part of its data portfolio: Talend’s data fabric solution enables you to deliver your information to every stakeholder through a comprehensive API delivery platform. If you need a data analyst tool to cover ETL processes, Talend might be worth considering.
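The three ETL steps can be illustrated with a minimal Python sketch that uses only the standard library: extract rows from CSV text, transform them (trim whitespace, normalise names, convert types, and drop incomplete records), and load the result into a SQLite table. This is a toy example of the pattern, not how Talend, a Java-based platform, implements it; the sample data and the `sales` table are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with inconsistent formatting and one incomplete row
RAW_CSV = """date,region,amount
2023-01-02,north,120.50
2023-01-02,South, 80
2023-01-03,north,
2023-01-03,west,50.25
"""

def extract(text):
    """Extract: parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalise region names, convert amounts, drop rows without an amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((row["date"], row["region"].strip().title(), float(amount)))
    return cleaned

def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (date TEXT, region TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region").fetchall())
```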

9. Automation Tools

As mentioned, the goal of all the solutions on this list is to make data analysts’ lives easier and their work more efficient. With that in mind, automation tools could not be left out. In simple terms, data analytics automation is the practice of using systems and processes to perform analytical tasks with almost no human interaction. In recent years, automation solutions have changed the way analysts work, as these tools assist them with a variety of tasks such as data discovery, preparation, and data replication, as well as simpler ones like report automation or writing scripts. Automating analytical processes significantly increases productivity, leaving more time for more important tasks. We will look at this in more detail through Jenkins, one of the leaders in open-source automation software.

JENKINS

KEY FEATURES:

Popular continuous integration (CI) solution with advanced automation features such as running code on multiple platforms

Job automation to set up customised tasks that run on a schedule or are triggered by a specific event

Several job automation tools and plugins for different purposes, such as Jenkins Job Builder, Jenkins Job DSL, or Jenkins Pipeline DSL

Developed in 2004 under the name Hudson, Jenkins is an open-source CI automation server that can be integrated with several DevOps tools via plugins. Primarily, Jenkins helps developers automate parts of their software development process, such as building, testing, and deploying. However, it is also widely used by data analysts to automate jobs such as running code and scripts daily or when a specific event occurs, for example, running a specific command when new data is available.

There are several ways to generate Jenkins jobs automatically. For example, Jenkins Job Builder takes simple descriptions of jobs in YAML or JSON format and turns them into runnable jobs in Jenkins’s format. The Jenkins Job DSL plugin, on the other hand, lets users generate jobs from other jobs and edit the XML configuration to supplement or fix any existing elements. Lastly, the Pipeline plugin is mostly used to build complex automated processes.

For Jenkins, automation is not useful if it’s not tied to integration. For this reason, it provides hundreds of plugins and extensions to integrate Jenkins with your existing tools. This way, the entire process of code generation and execution can be automated at every stage and on different platforms, leaving you enough time to perform other relevant tasks. Jenkins and its plugins are written in Java, meaning the tool can be installed on any operating system that runs Java. Users rated Jenkins 4.5 stars on Capterra and 4.4 stars on G2Crowd.
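Running a specific command when new data is available usually comes down to a small script that a scheduled Jenkins job (for example, one using a cron-style build trigger) calls on each run. The Python sketch below is a hypothetical example of such a script: it reports CSV files that no earlier run has seen and records them in a JSON state file. The file pattern, the state file, and the `find_new_files` name are assumptions; Jenkins itself would handle the scheduling, logs, and notifications.

```python
import json
import tempfile
from pathlib import Path

def find_new_files(data_dir, state_file, pattern="*.csv"):
    """Return names of files in data_dir matching pattern that no earlier run has seen."""
    state_path = Path(state_file)
    seen = set(json.loads(state_path.read_text())) if state_path.exists() else set()
    current = {p.name for p in Path(data_dir).glob(pattern)}
    new_files = sorted(current - seen)
    # Remember everything seen so far so the next run only reports later arrivals
    state_path.write_text(json.dumps(sorted(seen | current)))
    return new_files

# Demo in a throwaway directory: the first run reports both files, the second run none
with tempfile.TemporaryDirectory() as tmp:
    data_dir = Path(tmp)
    (data_dir / "jan.csv").write_text("day,amount\n1,100\n")
    (data_dir / "feb.csv").write_text("day,amount\n1,120\n")
    state = data_dir / "processed.json"
    first_run = find_new_files(data_dir, state)
    second_run = find_new_files(data_dir, state)

print(first_run, second_run)
```

In a real job, each reported file would be passed to the analysis step, and a non-zero exit code could be used to mark the Jenkins build as failed when processing goes wrong.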

10. DOCUMENT SHARING TOOLS

As an analyst working with programming, you have very likely found yourself having to share your code or analytical findings with others. Whether you want someone to check your code for errors or give any other kind of feedback on your work, a document sharing tool is the way to go. These solutions let users share interactive documents that can contain live code and other multimedia elements for a collaborative process. Below, we will present Jupyter Notebook, one of the most popular and efficient platforms for this purpose.

JUPYTER NOTEBOOK

KEY FEATURES:

Supports over 40 programming languages, including Python, R, Julia, C++, and more

Easily share notebooks with others via email, Dropbox, GitHub and Jupyter Notebook Viewer

In-browser editing for code, with automatic syntax highlighting, indentation, and tab completion

Jupyter Notebook is an open-source, web-based interactive development environment used to create and share documents called notebooks, which contain live code, data visualisations, and text in a simple and streamlined way. Its name is a reference to the core programming languages it supports, Julia, Python, and R, and, according to its website, it has a flexible interface that lets users view, execute, and share their code all in the same platform. Notebooks allow analysts, developers, and anyone else to combine code, comments, multimedia, and visualisations in an interactive document that can be easily shared and reworked directly in a web browser.

Although it uses Python by default, Jupyter Notebook supports over 40 programming languages and can be used in multiple scenarios. These include sharing notebooks with interactive visualisations (avoiding the static nature of other software), live documentation that explains how specific Python modules or libraries work, or simply sharing code and data files with others. Notebooks can be easily converted into different output formats such as HTML, LaTeX, PDF, and more. This level of versatility has earned the tool a 4.7-star rating on Capterra and 4.5 on G2Crowd.
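Part of what makes notebooks easy to share and convert is that an `.ipynb` file is plain JSON. The Python sketch below builds a minimal two-cell notebook (one Markdown cell and one unexecuted code cell) following our understanding of the nbformat version 4 structure; the content is hypothetical, and the official `nbformat` package is the more reliable way to create and validate notebooks in practice.

```python
import json

def markdown_cell(text):
    """A Markdown cell: explanatory text rendered in the notebook."""
    return {"cell_type": "markdown", "metadata": {}, "source": text}

def code_cell(code):
    """A code cell that has not been run yet (no execution count, no outputs)."""
    return {"cell_type": "code", "execution_count": None, "metadata": {}, "outputs": [], "source": code}

def make_notebook(cells):
    """Wrap cells in the top-level notebook structure (format version 4.4)."""
    return {"cells": cells, "metadata": {}, "nbformat": 4, "nbformat_minor": 4}

notebook = make_notebook([
    markdown_cell("# Monthly sales\nA short analysis shared with the team."),
    code_cell("sales = [110, 125, 138]\nprint(sum(sales))"),
])
text = json.dumps(notebook, indent=1)
# Saving `text` to a file such as "analysis.ipynb" (hypothetical name) should let Jupyter open it
print(text)
```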

11. Unified data analytics engines

If you work for a company that produces massive datasets and needs a big data management solution, then unified data analytics engines might be the best solution for your analytical processes. To make quality decisions in a big data environment, analysts need tools that give them full control of their company’s extensive data environment. That’s where machine learning and AI play a significant role. Apache Spark is one of the data analysis tools on our list that supports large-scale data processing with the help of an extensive ecosystem.

Apache Spark

KEY FEATURES:

High performance: Spark set a record in large-scale data sorting in 2014

A large ecosystem of data frames, streaming, machine learning, and graph computation

Perform exploratory analysis on petabyte-scale data without the need for downsampling

Apache Spark was originally developed at UC Berkeley in 2009, and since then it has expanded across industries: companies such as Netflix, Yahoo, and eBay have deployed Spark and processed petabytes of data with it, establishing it as a go-to solution for big data management and earning it a positive 4.2-star rating on both Capterra and G2Crowd. Its ecosystem consists of Spark SQL, streaming, machine learning, graph computation, and core Java, Scala, and Python APIs to ease development. Back in 2014, Spark officially set a record in large-scale sorting. The engine can also run certain workloads up to 100x faster than Hadoop MapReduce, which is crucial when processing massive volumes of data.

You can easily run applications in Java, Python, Scala, R, and SQL, while the more than 80 high-level operators that Spark offers make your data transformation easy and effective. As a unified engine, Spark comes with support for SQL queries, MLlib for machine learning, GraphX for graph computation, and streaming data processing, which can be combined to create additional, complex analytical workflows. Additionally, it runs on Hadoop, Kubernetes, Apache Mesos, standalone, or in the cloud, and can access diverse data sources. Spark is truly a powerful engine for analysts who need support in their big data environment.

12. Spreadsheet applications

Spreadsheets are one of the most traditional forms of data analysis. They are popular in every industry, business, and organisation, so there is a slim chance that you haven’t created at least one spreadsheet to analyse your data. Often used by people without the technical skills to write code, spreadsheets are suitable for fairly simple analysis that doesn’t require extensive training, complex or large volumes of data, or databases to manage. To look at spreadsheets in more detail, we have chosen Excel, one of the most popular in business.

Excel

KEY FEATURES:

Part of the Microsoft Office family, hence, it’s compatible with other Microsoft applications

Pivot tables and building complex equations through designated rows and columns

Perfect for smaller analysis processes through workbooks and quick sharing

With a 4.8-star rating on Capterra and 4.7 on G2Crowd, Excel needs a category of its own, since this powerful tool has been in the hands of analysts for a very long time. Often considered a traditional form of analysis, Excel is still widely used across the globe. The reason is fairly simple: few people have never used it or come across it at least once in their career. It’s a fairly versatile data analyst tool where you simply manipulate rows and columns to create your analysis. Once this part is finished, you can export your data and send it to the desired recipients, so you can use Excel as a reporting tool as well. However, you do need to update the data yourself, as Excel doesn’t offer automation features like other tools on our list. Excel has developed from an electronic version of the accounting worksheet into one of the most widespread tools for data analysts, used for creating pivot tables, managing smaller amounts of data, and working with the tabular form of analysis.

A wide range of functionalities accompany Excel, from arranging to manipulating, calculating and evaluating quantitative data to building complex equations and using pivot tables, conditional formatting, adding multiple rows and creating charts and graphs – Excel has definitely earned its place in traditional data management.
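A pivot table groups rows by one field, spreads another field across columns, and aggregates a value in each cell. The Python sketch below reproduces that logic for a SUM pivot with hypothetical sales records, which may help when moving an Excel analysis into code; the `pivot_sum` helper is an illustrative assumption, not an Excel feature or API.

```python
from collections import defaultdict

# Hypothetical sales records, like rows in a worksheet
records = [
    {"region": "North", "quarter": "Q1", "revenue": 100},
    {"region": "North", "quarter": "Q2", "revenue": 150},
    {"region": "South", "quarter": "Q1", "revenue": 80},
    {"region": "North", "quarter": "Q1", "revenue": 20},
    {"region": "South", "quarter": "Q2", "revenue": 60},
]

def pivot_sum(rows, index, columns, values):
    """Sum `values` for every (index, columns) combination, like a pivot table with SUM."""
    table = defaultdict(lambda: defaultdict(int))
    for row in rows:
        table[row[index]][row[columns]] += row[values]
    # Convert the nested defaultdicts to plain dicts for display
    return {key: dict(inner) for key, inner in table.items()}

summary = pivot_sum(records, index="region", columns="quarter", values="revenue")
for region, by_quarter in sorted(summary.items()):
    print(region, by_quarter)
```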

13. Industry-specific analytics tools

While many data analysis tools on this list are used across industries and applied daily in analysts’ workflows, some solutions are developed specifically for a single industry and cannot be used in another. For that reason, we have decided to include one of these solutions on our list, although there are many other industry-specific data analysis programs. Here we focus on Qualtrics, one of the leading research software platforms, which is used by more than 11,000 of the world’s brands, has over 2M users across the globe, and offers many industry-specific features focused on market research.

Qualtrics

KEY FEATURES:

5 main experience features: design, customer, brand, employee, and product

Additional research services by their in-house experts

Advanced statistical analysis with their Stats iQ analysis tool

Qualtrics is data analysis software focused on experience management (XM) and is used for market research by companies across the globe. The tool, which has a positive 4.8-star rating on Capterra and 4.4 on G2Crowd, offers 5 product pillars for enterprise XM, which include design, customer, brand, employee, and product experiences, as well as additional research services performed by its own experts. Its XM platform consists of a directory, automated actions, the Qualtrics iQ tool, and platform security features that combine automated and integrated workflows into a single point of access. That way, users can refine each stakeholder’s experience and use the tool as an “ultimate listening system.”

Since automation is becoming increasingly important in our data-driven age, Qualtrics has also developed drag-and-drop integrations into the systems that companies already use, such as CRM, ticketing, or messaging, while enabling users to deliver automatic notifications to the right people. This feature works across brand tracking and product feedback as well as customer and employee experience. Other critical features, such as the directory, where users can connect data from 130 channels (including web, SMS, voice, video, or social), and Qualtrics iQ, which analyses unstructured data, enable users to utilise its predictive analytics engine and build detailed customer journeys. If you’re looking for data analytics software to handle your company’s market research, Qualtrics is worth a try.
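Conceptually, automated notifications of this kind are a set of rules that match incoming feedback and decide who should hear about it. The sketch below illustrates that idea in plain Python; the `Response` fields, the score threshold, and the team names are all hypothetical and do not reflect Qualtrics' actual API or configuration format.

```python
from dataclasses import dataclass

@dataclass
class Response:
    """A hypothetical feedback response (not Qualtrics' real data model)."""
    respondent: str
    channel: str   # e.g. "web", "sms"
    score: int     # 0-10 satisfaction score
    comment: str

# Hypothetical routing rules: (predicate, team to notify), checked in order.
RULES = [
    (lambda r: r.score <= 4, "customer-success"),
    (lambda r: "bug" in r.comment.lower(), "product"),
]

def route(response: Response) -> list[str]:
    """Return every team whose rule matches the response."""
    return [team for matches, team in RULES if matches(response)]

inbox = [
    Response("a@example.com", "web", 2, "Checkout keeps failing, looks like a bug"),
    Response("b@example.com", "sms", 9, "Great service"),
]
for resp in inbox:
    print(resp.respondent, route(resp))
```

Keeping the rules as data rather than hard-coded branches mirrors the idea of configuring integrations instead of writing code for each case.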

14. Data science platforms

Data science can be applied with most software solutions on our list, but it deserves a special category since it has developed into one of the most sought-after skills of the decade. Whether you need data preparation, integration, or reporting tools, data science platforms will probably be high on your list for simplifying analytical processes and utilising advanced analytics models to generate in-depth insights. To put this into perspective, we will present RapidMiner, one of the top data analysis platforms, which combines deep yet simplified analysis.

RapidMiner

KEY FEATURES:

A comprehensive data science and machine learning platform with 1500+ algorithms and functions

Possible to integrate with Python and R as well as support for database connections (e.g. Oracle)

Advanced analytics features for descriptive and prescriptive analytics

RapidMiner, which was acquired by Altair in 2022 as part of its data analytics portfolio, is used by data scientists to prepare data, apply machine learning, and manage model operations in more than 40,000 organisations around the world that rely heavily on analytics. To unify the entire data science cycle, RapidMiner is built on 5 core platforms and 3 automated data science products that help in the design and deployment of analytics processes. Its data exploration features, such as visualisations and descriptive statistics, enable you to get the information you need, while predictive analytics helps with use cases such as churn prevention, risk modelling, text mining, and customer segmentation.
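Customer segmentation, one of the use cases above, is often done with clustering. The following is a minimal k-means sketch in plain Python, not RapidMiner's own implementation. The customer data (annual spend in thousands, orders per year) is invented, and the naive initialisation (the first k points) is a simplification that production tools usually replace with smarter seeding such as k-means++.

```python
import math

# Hypothetical customers: (annual spend in thousands, orders per year)
customers = [(1.0, 2.0), (9.0, 20.0), (1.5, 3.0), (2.0, 2.5), (8.5, 18.0), (10.0, 22.0)]

def kmeans(points, k, iterations=10):
    """Plain k-means: assign each point to its nearest centroid, then move
    each centroid to the mean of the points assigned to it."""
    centroids = [list(p) for p in points[:k]]  # naive init: first k points
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        for i, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[i] = [sum(coord) / len(members) for coord in zip(*members)]
    return centroids, clusters

centroids, segments = kmeans(customers, k=2)
for centroid, members in zip(centroids, segments):
    print(centroid, members)
```

Here the two segments separate low-spend, infrequent buyers from high-spend, frequent ones; a platform would add scaling of features and a principled choice of k.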

With more than 1500 algorithms and data functions, support for third-party machine learning libraries, integration with Python or R, and advanced analytics, RapidMiner has developed into a data science platform for deep analytical purposes. Additionally, comprehensive tutorials and full automation, where needed, simplify processes so you don’t need to perform analysis manually. All these positive traits have earned the tool a 4.4-star rating on Capterra and 4.6 stars on G2Crowd. If you’re looking for analyst tools and software focused on deep data science management and machine learning, then RapidMiner should be high on your list.

15. Data cleansing platforms

The amount of data being produced is only getting bigger, and so is the likelihood that it contains errors. Data cleansing solutions were developed to help analysts avoid these errors, which can damage the entire analysis process. These tools help prepare the data by eliminating errors, inconsistencies, and duplicates, enabling users to draw accurate conclusions from it. Before cleansing platforms existed, analysts cleaned data manually, a risky practice since the human eye is prone to error. That said, powerful cleansing solutions have proved to boost efficiency and productivity while providing a competitive advantage as data becomes reliable. The cleansing software we picked for this section is a popular solution named OpenRefine.
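To make the three kinds of problems concrete (errors, inconsistencies, and duplicates), here is a minimal Python sketch of the rules a cleansing step might apply to a list of contact records. The field names and the crude e-mail check are hypothetical; real tools offer far richer, configurable checks.

```python
def clean_records(records):
    """Trim stray whitespace, normalise e-mail case, reject rows that fail a
    basic validity check, and drop duplicates (keeping the first occurrence)."""
    seen = set()
    cleaned, rejected = [], []
    for rec in records:
        name = " ".join(rec.get("name", "").split())  # collapse inner/outer spaces
        email = rec.get("email", "").strip().lower()
        if not name or "@" not in email:  # crude validity rule (assumption)
            rejected.append(rec)
            continue
        key = (name.lower(), email)
        if key in seen:
            continue  # duplicate of a row already kept
        seen.add(key)
        cleaned.append({"name": name, "email": email})
    return cleaned, rejected

raw = [
    {"name": "  Ada   Lovelace ", "email": "ADA@example.com "},
    {"name": "Ada Lovelace", "email": "ada@example.com"},
    {"name": "Alan Turing", "email": "not-an-email"},
    {"name": "Grace Hopper", "email": "grace@example.com"},
]
cleaned, rejected = clean_records(raw)
print(cleaned)
print(rejected)
```

Returning rejected rows instead of silently dropping them lets an analyst review what was filtered out.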

OpenRefine

KEY FEATURES:

Data explorer to clean “messy” data using transformations, facets, and clustering, among others

Transform data to the format you desire, for example, turn a list into a table by importing the file into OpenRefine

Includes a large list of extensions and plugins to link and extend datasets with various web services

Previously known as Google Refine, OpenRefine is a Java-based, open-source desktop application for working with large datasets that need to be cleaned. The tool, with ratings of 4.0 stars on Capterra and 4.6 on G2Crowd, also enables users to transform their data from one format to another and extend it with web services and external data. OpenRefine’s interface resembles that of spreadsheet applications and it can handle CSV files, but all in all, it behaves more like a database. Upload your datasets into the tool and use its many cleaning features to spot anything from extra spaces to duplicated fields.
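OpenRefine’s clustering feature groups cell values that are probably the same thing spelled differently. One of its documented methods, key collision with a "fingerprint" keying function, is simple enough to approximate in a few lines of Python. This sketch follows the general steps described in OpenRefine's documentation (trim, lowercase, strip punctuation, fold accents to ASCII, sort and de-duplicate tokens), but it is not OpenRefine’s code and edge cases may differ.

```python
import string
import unicodedata
from collections import defaultdict

def fingerprint(value: str) -> str:
    """Approximate OpenRefine's 'fingerprint' key (not its actual code)."""
    value = value.strip().lower()
    # Remove ASCII punctuation; turn control characters into spaces.
    value = "".join(
        " " if unicodedata.category(ch) == "Cc" else ch
        for ch in value
        if ch not in string.punctuation
    )
    # Fold accented Latin letters to ASCII, e.g. "ö" -> "o".
    value = unicodedata.normalize("NFKD", value).encode("ascii", "ignore").decode("ascii")
    # Sort the unique whitespace-separated tokens so word order no longer matters.
    return " ".join(sorted(set(value.split())))

def cluster(values):
    """Key collision: group values sharing a fingerprint, keep groups with 2+ spellings."""
    groups = defaultdict(list)
    for v in values:
        groups[fingerprint(v)].append(v)
    return [g for g in groups.values() if len(set(g)) > 1]

names = ["Acme Inc.", "acme inc", "Inc. Acme", "  ACME   INC ", "Gödel Books", "Godel Books", "Other Co"]
for group in cluster(names):
    print(group)
```

Each printed group is a candidate for merging into one canonical spelling, which is the decision OpenRefine leaves to the user.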

OpenRefine is available in more than 15 languages, and one of its main principles is privacy. The tool works by running a small server on your computer, and your data never leaves that server unless you decide to share it with someone else.

16. Data mining tools

Next on our list of data analyst tools, we are going to touch on data mining. In short, data mining is an interdisciplinary subfield of computer science that uses a mix of statistics, artificial intelligence, and machine learning techniques and platforms to identify hidden trends and patterns in large, complex datasets. To do so, analysts have to perform various tasks, including data classification, cluster analysis, association analysis, regression analysis, and predictive analytics, using professional data mining software. Businesses rely on these platforms to anticipate future issues and mitigate risks, make informed decisions to plan their future strategies, and identify new opportunities to grow. There are multiple data mining solutions on the market at the moment, most of them relying on automation as a key feature. We will focus on Orange, one of the leading data mining tools at the moment.
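Association analysis, one of the tasks listed above, boils down to counting how often items occur together. The sketch below computes the two standard measures for two-item rules X → Y in plain Python: support, the share of transactions containing both X and Y, and confidence, the support of X and Y together divided by the support of X. The baskets and thresholds are invented for illustration; dedicated tools use algorithms such as Apriori or FP-Growth to scale this to larger itemsets.

```python
from collections import Counter
from itertools import combinations

# Hypothetical shopping baskets
baskets = [
    {"bread", "milk"},
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"milk", "eggs"},
    {"bread", "butter", "eggs"},
]

def pair_rules(transactions, min_support=0.4, min_confidence=0.6):
    """Return (X, Y, support, confidence) for two-item rules X -> Y that pass
    both thresholds. support = P(X and Y); confidence = P(X and Y) / P(X)."""
    n = len(transactions)
    item_counts = Counter(item for t in transactions for item in t)
    pair_counts = Counter(pair for t in transactions for pair in combinations(sorted(t), 2))
    rules = []
    for (a, b), together in pair_counts.items():
        support = together / n
        if support < min_support:
            continue
        for x, y in ((a, b), (b, a)):  # test the rule in both directions
            confidence = together / item_counts[x]
            if confidence >= min_confidence:
                rules.append((x, y, support, round(confidence, 2)))
    return rules

rules = pair_rules(baskets)
for x, y, support, confidence in rules:
    print(f"{x} -> {y}: support={support}, confidence={confidence}")
```

Note that the rule direction matters: "butter → bread" holds in every butter basket, while "bread → butter" holds in only three of four bread baskets.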

Orange

KEY FEATURES:

Visual programming interface to easily perform data mining tasks via drag and drop

Multiple widgets offering a set of data analytics and machine learning functionalities

Add-ons for text mining and natural language processing to extract insights from text data

Orange is an open-source data mining and machine learning tool that has existed for more than 20 years as a project from the University of Ljubljana. The tool offers a mix of data mining features, which can be used via visual programming or Python scripting, as well as other data analytics functionalities for simple and complex analytical scenarios. It works through a “canvas interface” in which users place different widgets to create a data analysis workflow. These widgets offer different functionalities, such as reading, inputting, filtering, and visualising the data, as well as setting up machine learning algorithms for classification and regression, among other things.
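In Orange, a classification workflow is typically a chain of widgets: one that reads the data, a learner such as the kNN widget, and one that produces or evaluates predictions. To show what such a learner does under the hood, here is a from-scratch k-nearest-neighbours classifier in plain Python. It is not Orange's scripting API, and the measurements are made up in the style of the classic Iris dataset.

```python
import math
from collections import Counter

def knn_predict(train, query, k=3):
    """Label `query` by majority vote among its k nearest training rows.
    `train` is a list of (features, label) pairs."""
    neighbours = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

# Hypothetical flower measurements: (petal length cm, petal width cm) -> species
train = [
    ((1.4, 0.2), "setosa"),
    ((1.3, 0.2), "setosa"),
    ((1.5, 0.3), "setosa"),
    ((4.7, 1.4), "versicolor"),
    ((4.5, 1.5), "versicolor"),
    ((5.0, 1.7), "versicolor"),
]
print(knn_predict(train, (1.6, 0.4)))
print(knn_predict(train, (4.6, 1.3)))
```

The visual workflow hides exactly these steps (distance computation, neighbour selection, voting) behind a widget's settings panel.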

What makes this software so popular compared with others in the same category is that it provides beginners and expert users alike with a pleasant user experience, especially when it comes to generating data visualisations quickly and easily. Orange, which has a 4.2-star rating on both Capterra and G2Crowd, offers users multiple online tutorials to get them acquainted with the platform. Additionally, the software learns from the user’s preferences and reacts accordingly, which is one of its most praised functionalities.

17. Data visualisation platforms

Data visualisation has become one of the most indispensable elements of data analytics tools. If you’re an analyst, there is a strong chance you have had to develop a visual representation of your analysis or utilise some form of data visualisation at some point. Here we need to make clear that there are differences between professional data visualisation tools, often integrated into the BI tools already mentioned, freely available solutions, and paid charting libraries. They’re simply not the same. Also, if you look at data visualisation in a broad sense, Excel and PowerPoint offer it as well, but they simply cannot meet the advanced requirements of a data analyst, who usually chooses professional BI or data viz tools as well as modern charting libraries, as mentioned. We will take a closer look at Highcharts as one of the most popular charting libraries on the market.

Highcharts

KEY FEATURES:

Interactive JavaScript library compatible with all major web browsers and mobile systems like Android and iOS

Designed mostly for a technical-based audience (developers)

WebGL-powered boost module to render millions of datapoints directly in the browser

Highcharts is a multi-platform library designed for developers looking to add interactive charts to web and mobile projects. With a promising 4.6-star rating on Capterra and 4.5 on G2Crowd, this charting library works with any back-end database, and data can be supplied as CSV or JSON, or updated live. It also features intelligent responsiveness that fits the chart into the dimensions of its container and automatically places non-graph elements in the optimal location.
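Because Highcharts is configured with a plain options object, a back end can prepare that object from any data source and hand it to the front end as JSON. The sketch below, in Python, turns a small CSV export into an options object using the `chart.type`, `title.text`, `xAxis.categories`, and `series` keys; the CSV content and the `csv_to_highcharts` helper are hypothetical. In the browser, the resulting object would be passed to `Highcharts.chart("container", options)`.

```python
import csv
import io
import json

# Hypothetical CSV export from a back-end database
CSV_DATA = """month,revenue,costs
Jan,120,80
Feb,135,90
Mar,150,95
"""

def csv_to_highcharts(csv_text, chart_type="line", title="Revenue vs costs"):
    """Build a Highcharts options dict: the first CSV column becomes the
    x-axis categories, every other column becomes one numeric series."""
    reader = csv.DictReader(io.StringIO(csv_text))
    x_field, *series_fields = reader.fieldnames
    rows = list(reader)
    return {
        "chart": {"type": chart_type},
        "title": {"text": title},
        "xAxis": {"categories": [row[x_field] for row in rows]},
        "series": [
            {"name": field, "data": [float(row[field]) for row in rows]}
            for field in series_fields
        ],
    }

options = csv_to_highcharts(CSV_DATA)
print(json.dumps(options, indent=2))
```

Changing `chart_type` to "column" or "area" switches the chart type without touching the data mapping.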

Highcharts supports line, spline, area, column, bar, pie, and scatter charts, among many others, that help developers in their web-based projects. Additionally, its WebGL-powered boost module enables you to render millions of datapoints in the browser. As far as the source code is concerned, you can download it and make your own edits, whether you use the free or the commercial license. In essence, Highcharts is designed mostly for a technical target group, so you should familiarise yourself with developers’ workflow and its JavaScript charting engine. If you’re looking for an easier-to-use but still powerful solution, you might want to consider an online data visualisation tool like datapine.

3) Key Takeaways & Guidance

We have explained what data analyst tools are and given a brief description of each to provide you with the insights needed to choose the one (or several) that would best fit your analytical processes. We aimed for diversity, presenting tools that suit technically skilled analysts, such as R Studio, Python, or MySQL Workbench. On the other hand, data analysis software like datapine covers the needs of data analysts and business users alike, so we tried to cover multiple perspectives and skill levels.

We hope that by now you have a clearer perspective on how modern solutions can help analysts perform their jobs more efficiently in a less error-prone environment. To conclude, if you want to start an exciting analytical journey and test professional BI analytics software for yourself, you can try datapine with a 14-day trial, completely free of charge and with no hidden costs.
